Should AI be stopped? Experts call for "immediate pause" to research

More than 1,200 scientists and technology leaders have published an open letter calling for research on AI models bigger than GPT-4 to be halted amid concerns over unknown impacts and risks to society.

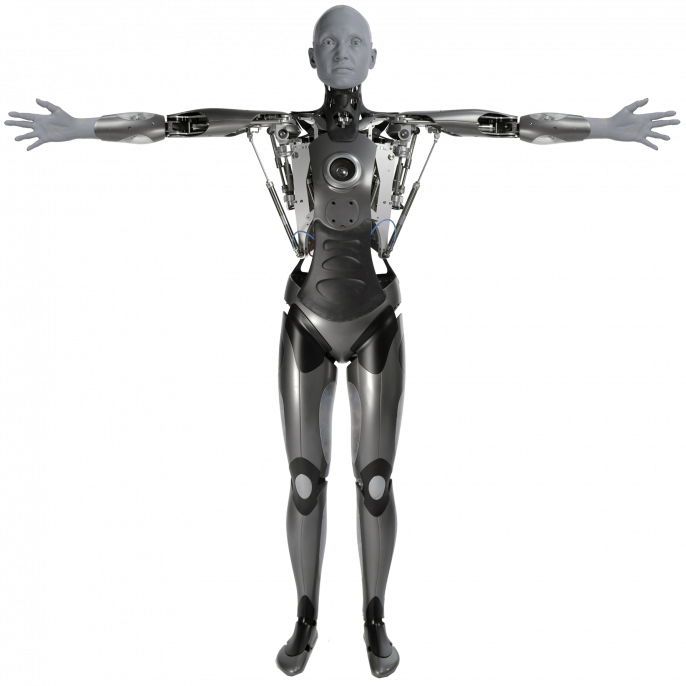

Recent months have seen an avalanche of progress in AI. From language models like ChatGPT and its successor GPT-4, to photorealistic image generation and video synthesis, to multimodal robotics, the latest advances are a step change from anything seen before. Public interest in AI has surged, as what once seemed like sci-fi is now rapidly moving into view.

Last week, Microsoft co-founder and philanthropist Bill Gates wrote on his blog: "The development of AI is as fundamental as the creation of the microprocessor, the personal computer, the Internet, and the mobile phone. It will change the way people work, learn, travel, get health care, and communicate with each other. Entire industries will reorient around it."

But while AI could provide huge benefits to humanity, the creation of such a powerful technology brings many risks and unknowns, which could be catastrophic if poorly managed. Like the invention of nuclear weapons, artificial general intelligence (AGI) could be world-altering – a genie that, once out of the bottle, is difficult or impossible to put back.

Even ignoring the worst-case scenario of a Terminator-style apocalypse, other significant impacts may occur. With machines and software able to perform tasks previously done by humans, technological unemployment could surge in the years and decades ahead. Goldman Sachs has just published a report warning that 300 million jobs are likely to be disrupted in the near future.

Privacy and security might also be threatened by AI systems gathering vast amounts of personal data for processing by ever more sophisticated algorithms. Existing biases, fake news and discrimination in society could be perpetuated if the models contain flaws and inaccurate data.

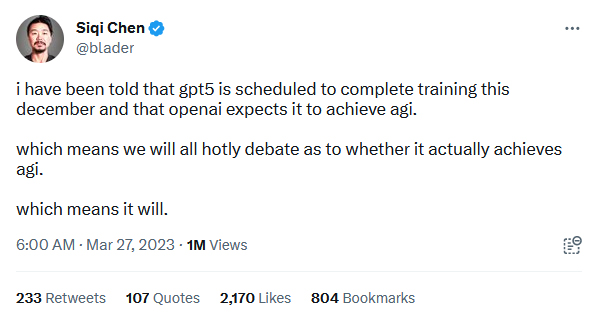

The current race to produce larger and larger models – some now reaching into hundreds of billions of parameters – has a growing number of people concerned. More than 1,200 scientists and technology leaders have published an open letter calling for an immediate halt to development of these large-scale models in order to assess their safety and risks. We have reproduced their statement in full below.

AI systems with human-competitive intelligence can pose profound risks to society and humanity, as shown by extensive research and acknowledged by top AI labs. As stated in the widely-endorsed Asilomar AI Principles, Advanced AI could represent a profound change in the history of life on Earth, and should be planned for and managed with commensurate care and resources. Unfortunately, this level of planning and management is not happening, even though recent months have seen AI labs locked in an out-of-control race to develop and deploy ever more powerful digital minds that no one – not even their creators – can understand, predict, or reliably control.

Contemporary AI systems are now becoming human-competitive at general tasks, and we must ask ourselves: Should we let machines flood our information channels with propaganda and untruth? Should we automate away all the jobs, including the fulfilling ones? Should we develop nonhuman minds that might eventually outnumber, outsmart, obsolete and replace us? Should we risk loss of control of our civilization? Such decisions must not be delegated to unelected tech leaders. Powerful AI systems should be developed only once we are confident that their effects will be positive and their risks will be manageable. This confidence must be well justified and increase with the magnitude of a system's potential effects. OpenAI's recent statement regarding artificial general intelligence, states that "At some point, it may be important to get independent review before starting to train future systems, and for the most advanced efforts to agree to limit the rate of growth of compute used for creating new models." We agree. That point is now.

Therefore, we call on all AI labs to immediately pause for at least 6 months the training of AI systems more powerful than GPT-4. This pause should be public and verifiable, and include all key actors. If such a pause cannot be enacted quickly, governments should step in and institute a moratorium.

AI labs and independent experts should use this pause to jointly develop and implement a set of shared safety protocols for advanced AI design and development that are rigorously audited and overseen by independent outside experts. These protocols should ensure that systems adhering to them are safe beyond a reasonable doubt. This does not mean a pause on AI development in general, merely a stepping back from the dangerous race to ever-larger unpredictable black-box models with emergent capabilities.

AI research and development should be refocused on making today's powerful, state-of-the-art systems more accurate, safe, interpretable, transparent, robust, aligned, trustworthy, and loyal.

In parallel, AI developers must work with policymakers to dramatically accelerate development of robust AI governance systems. These should at a minimum include: new and capable regulatory authorities dedicated to AI; oversight and tracking of highly capable AI systems and large pools of computational capability; provenance and watermarking systems to help distinguish real from synthetic and to track model leaks; a robust auditing and certification ecosystem; liability for AI-caused harm; robust public funding for technical AI safety research; and well-resourced institutions for coping with the dramatic economic and political disruptions (especially to democracy) that AI will cause.

Humanity can enjoy a flourishing future with AI. Having succeeded in creating powerful AI systems, we can now enjoy an "AI summer" in which we reap the rewards, engineer these systems for the clear benefit of all, and give society a chance to adapt. Society has hit pause on other technologies with potentially catastrophic effects on society (examples include human cloning, human germline modification, gain-of-function research, and eugenics). We can do so here. Let's enjoy a long AI summer, not rush unprepared into a fall.

The signatories (1,279 at the time of writing) include prominent scientists and industry figures such as Steve Wozniak (Apple co-founder), Elon Musk (CEO of SpaceX, Tesla, and Twitter, co-founder of OpenAI), Rachel Bronson (President, Bulletin of the Atomic Scientists), and Max Tegmark (Professor at MIT's Center for Artificial Intelligence and Fundamental Interactions, President of the Future of Life Institute).

On social media, however, others have opposed the letter – pointing out that if companies like OpenAI halt their research, hostile states and bad actors may then take advantage and leapfrog ahead.
